Maybe AI is A-OK after all? A year after his initial study raised serious concerns about potentially buggy code written by AI coding assistants, Dr. Brendan Dolan-Gavitt now reports that the difference in security risk between source code written by human programmers and code written with the help of large language models (LLMs) may not be “statistically significant.”

Dolan-Gavitt, an assistant professor of computer science and engineering at NYU Tandon, was part of the research team that published “Asleep at the Keyboard? Assessing the Security of GitHub Copilot’s Code Contributions.” That study found that about 40 percent of the code generated by Copilot, an AI pair programmer, included potentially exploitable weaknesses.

The changed perspective was sparked by a second study by Dolan-Gavitt and a number of other researchers from New York University. As explained by Dolan-Gavitt in an October 7, 2022 article in The Register, “The difference between the two papers is that ‘Asleep at the Keyboard’ was looking at fully automated code generation (no human in the loop), and we did not have human users to compare against.” The newer paper “tries to directly tackle those missing pieces, by having half of the users get assistance from Codex (the model that powers Copilot) and having the other half write the code themselves.”

Though the newer study seems to indicate that AI-assisted code does not increase the vulnerability of programs, Dolan-Gavitt cautions, “I wouldn’t draw strong conclusions from this, particularly since it was a small study and the users were all students rather than professional developers.”

The new paper is coauthored by Drs. Gustavo Sandoval, Industry Professor of Computer Science and Engineering; Ramesh Karri, Professor of Electrical and Computer Engineering; Siddharth Garg, Institute Associate Professor; and Hammond Pearce, Research Assistant Professor at the Center for Cybersecurity. Teo Nys, a recent B.S. graduate now working as a software engineer at Kona, also contributed to the paper.

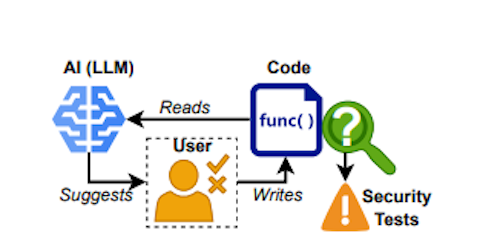

The diagram above comes from “Security Implications of Large Language Model Code Assistants: A User Study” and illustrates the security impact of LLM-assisted code.